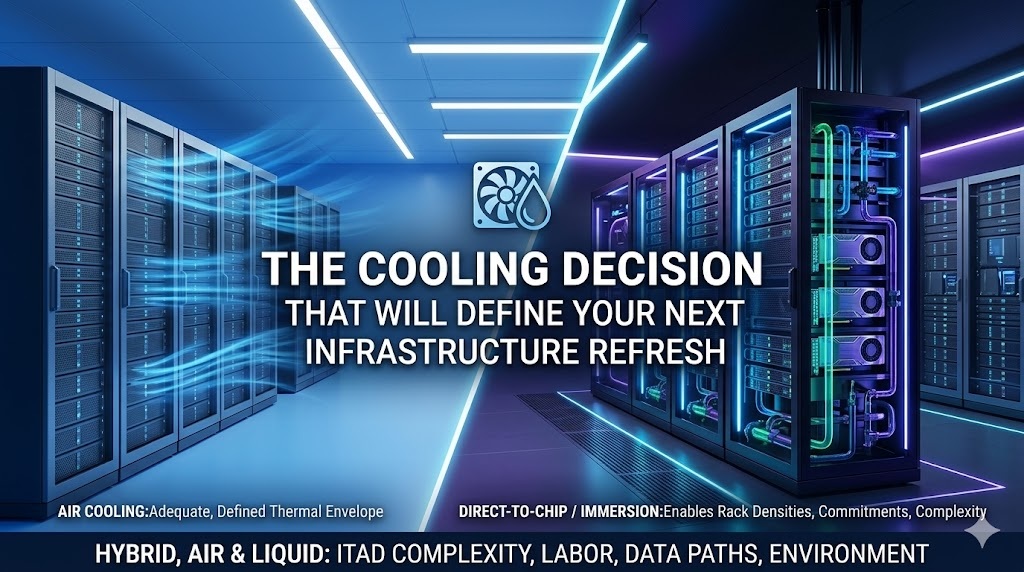

The way the market talks about cooling, you’d think the choice is simple: air cooling is legacy, liquid cooling is the future, and anyone still running CRAC units at scale is behind.

That’s wrong, and it’s costing people money.

Liquid cooling delivers real efficiency gains and enables rack densities that air simply can’t touch. It also introduces infrastructure commitments, facility dependencies, and decommission complexity that don’t show up in the vendor pitch.

The right architecture depends on what’s actually in your racks.

For most enterprise environments, the answer isn’t liquid everywhere. It’s liquid where the thermal load justifies it, and air everywhere else.

Air Cooling: Still the Default, With Real Limits

Air cooling remains the dominant architecture in most enterprise data centers. And for most non-AI workloads, it remains entirely adequate. Computer Room Air Conditioner (CRAC) and Computer Room Air Handler (CRAH) units circulate chilled air through raised floor plenums or overhead supply.

The cool air picks up heat as it passes through the servers and exhausts at the rear of the rack. The cooling systems keep ambient temperature within a defined thermal envelope.

This infrastructure is well understood, the maintenance skills are widely available, and the equipment ecosystem (servers, switches, storage) is designed and optimized around it.

The limit is physics.

Water holds roughly 3,500 times more heat than air per unit volume. Moving enough air to handle the heat density of a modern GPU cluster requires fans running at speeds that consume significant power and generate noise. On top of that, CRAC units themselves draw considerable energy.
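To put rough numbers on that, here is a minimal sketch of the flow needed to carry heat away at a fixed coolant temperature rise, using the standard relation heat = density × specific heat × volume flow × temperature rise. The property values are textbook approximations near room temperature, and the 40 kW rack and 12 K rise are illustrative assumptions, not figures from any specific facility.

```python
# Approximate fluid properties near room temperature and sea-level pressure.
AIR_DENSITY = 1.2       # kg/m^3
AIR_CP = 1005.0         # J/(kg*K)
WATER_DENSITY = 998.0   # kg/m^3
WATER_CP = 4182.0       # J/(kg*K)

M3S_TO_CFM = 2118.88    # cubic feet per minute in one m^3/s
M3S_TO_LPM = 60_000.0   # litres per minute in one m^3/s


def volumetric_heat_capacity(density: float, cp: float) -> float:
    """Heat absorbed per cubic metre per kelvin, in J/(m^3*K)."""
    return density * cp


def required_flow_m3_per_s(heat_watts: float, delta_t_kelvin: float,
                           density: float, cp: float) -> float:
    """Volume flow needed to remove heat_watts at the given temperature rise."""
    if delta_t_kelvin <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_watts / (volumetric_heat_capacity(density, cp) * delta_t_kelvin)


rack_watts = 40_000.0   # hypothetical 40 kW rack
delta_t = 12.0          # assumed coolant temperature rise in kelvin

air_flow = required_flow_m3_per_s(rack_watts, delta_t, AIR_DENSITY, AIR_CP)
water_flow = required_flow_m3_per_s(rack_watts, delta_t, WATER_DENSITY, WATER_CP)

print(f"air:   {air_flow:.2f} m^3/s ({air_flow * M3S_TO_CFM:,.0f} CFM)")
print(f"water: {water_flow * M3S_TO_LPM:.1f} L/min")
```

Under these assumptions the air path needs close to 3 cubic metres per second through a single rack, while the water path needs a flow on the order of a garden hose. Real designs differ in allowable temperature rise, so treat the output as an order-of-magnitude comparison only.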

According to the Association for Computer Operations Management, rack density rose from 7 kW per rack in 2021 to 16 kW per rack in 2025, with the steepest growth in AI and hyperscale deployments. At rack densities above 30 to 40 kilowatts, air cooling becomes an increasingly expensive proposition. Above 60 kilowatts, it stops being practical in any conventional form.

For enterprise environments running mixed workloads (AI inference clusters alongside traditional compute, storage, and networking) the requirements get more complicated. The storage rows and networking gear stay well within air cooling’s range. The GPU nodes don’t.

Air-cooled hardware decommission

Decommissioning air-cooled hardware is simple. It comes out of the rack the same way it went in: no residue, no fluid handling, no contamination concerns. Servers, drives, and components are ready for testing, valuation, and either resale or responsible recycling the moment they’re powered down and removed.

For ITAD purposes, air-cooled hardware is the simplest scenario.

Direct-to-Chip Liquid Cooling: The Pragmatic Entry Point

Direct-to-chip (D2C) cooling (sometimes called cold plate cooling) delivers liquid coolant through metal plates mounted directly on high-heat components: CPUs, GPUs, and accelerators.

A closed loop runs coolant from a Coolant Distribution Unit (CDU) through rack-level piping to the cold plates and back, transferring heat to a secondary building loop or heat exchanger. The server exhaust air still carries some residual heat, typically handled by a smaller supplemental air system, but ~70-90% of the thermal load is captured directly at the chip.
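The capture range matters because the remainder still lands on the air system. A minimal sketch of that split, assuming a hypothetical 100 kW rack and treating the capture fraction as a single number (in practice it varies with server design and coolant temperature):

```python
def split_thermal_load(rack_kw: float, capture_fraction: float) -> tuple[float, float]:
    """Return (kW removed by the liquid loop, kW left for supplemental air)."""
    if rack_kw < 0:
        raise ValueError("rack load cannot be negative")
    if not 0.0 <= capture_fraction <= 1.0:
        raise ValueError("capture fraction must be between 0 and 1")
    liquid_kw = rack_kw * capture_fraction
    return liquid_kw, rack_kw - liquid_kw


rack_kw = 100.0  # hypothetical rack load, for illustration only
for fraction in (0.70, 0.90):
    liquid_kw, air_kw = split_thermal_load(rack_kw, fraction)
    print(f"capture {fraction:.0%}: liquid {liquid_kw:.0f} kW, air {air_kw:.0f} kW")
```

At the low end of the capture range, the residual air load on a rack this size is comparable to a fully loaded conventional air-cooled rack, which is why the supplemental air system still has to be sized deliberately.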

D2C currently commands the majority of the liquid cooling market. Its adoption advantage is that it doesn’t require servers to be rebuilt or submerged: standard server form factors can be retrofitted or purchased liquid-ready from Dell, HPE, Lenovo, and others. NVIDIA explicitly recommends direct-to-chip cooling for its DGX and HGX H100 systems. The CDU infrastructure requires facility plumbing modifications and leak detection systems, but the transition is significantly less disruptive than immersion cooling – another proven liquid cooling method.

Direct-to-chip liquid cooling hardware decommission

At the decommission stage, D2C introduces a specific complication that air cooling doesn’t: the cold plate attachment. Cold plates are mechanically fastened to CPU and GPU packages, typically with thermal interface material (TIM) between the cold plate and the chip package.

Removing a cold plate without damaging the processor requires knowing the specific torque specifications and removal procedures. Technicians who treat D2C servers like air-cooled servers will damage components: the cold plate, the TIM layer, the processor package, or all three. Damaging a usable H100 is essentially lighting thousands of dollars on fire.

Residual coolant in the rack-side loop needs to be properly drained and disposed of before hardware is removed. This is not a complex hazardous materials situation; water-glycol mixtures are well-understood. But it requires a defined draining procedure.

Your ITAD partner should know to ask about it before the decommission crew arrives.

Immersion Cooling: Maximum Density, Maximum Transition Complexity

Immersion cooling submerges entire servers in a dielectric fluid: a non-conductive liquid that absorbs heat from every component simultaneously.

In single-phase immersion, the fluid circulates through the tank and through an external heat exchanger, remaining liquid throughout the cycle. In two-phase immersion, a lower-boiling-point fluid vaporizes as it absorbs heat, condenses in a heat exchanger above the tank, and returns as liquid. This achieves significantly higher heat transfer efficiency.

The density numbers are substantial. Single-phase immersion handles rack densities from 100 to 120 kilowatts. Two-phase systems push higher. A well-run air-cooled data center typically achieves a Power Usage Effectiveness (PUE) of 1.4 to 1.6, according to AKCP. That means for every unit of energy delivered to IT equipment, another 0.4 to 0.6 units go to facility overhead, largely cooling. Liquid-cooled facilities get PUE much lower, to 1.1 or less.
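The PUE arithmetic is easy to run for your own load. PUE is total facility energy divided by IT energy, so overhead is IT load times (PUE minus 1). The sketch below assumes a hypothetical 1 MW IT load running flat all year; both the load and the constant-utilization assumption are illustrative.

```python
HOURS_PER_YEAR = 8760


def overhead_kw(it_load_kw: float, pue: float) -> float:
    """Facility overhead power (cooling, power distribution, etc.) in kW."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * (pue - 1.0)


def annual_overhead_mwh(it_load_kw: float, pue: float) -> float:
    """Overhead energy per year in MWh, assuming a constant load."""
    return overhead_kw(it_load_kw, pue) * HOURS_PER_YEAR / 1000.0


it_load_kw = 1000.0  # hypothetical 1 MW of IT load
for label, pue in (("air-cooled", 1.5), ("liquid-cooled", 1.1)):
    print(f"{label}: PUE {pue} -> "
          f"{overhead_kw(it_load_kw, pue):.0f} kW overhead, "
          f"{annual_overhead_mwh(it_load_kw, pue):.0f} MWh per year")
```

With these inputs the gap between the two PUE values is about 400 kW of continuous overhead. Multiply by your own energy price to see whether that covers the added infrastructure and decommission cost.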

The tradeoff is infrastructure commitment.

Immersion requires tank hardware, custom-designed watertight enclosures sized for specific server configurations, CDUs engineered for immersion, modified facility plumbing, and dielectric fluid management systems.

Standard servers may require hardware modifications before immersion: fans are typically removed, and components must be confirmed compatible with the specific dielectric chemistry being used. Intel has formally certified specific dielectric fluids for use with its Xeon processor lines. NVIDIA’s Blackwell-architecture GPUs are designed with liquid cooling, including immersion, as the intended thermal management approach.

Immersion cooling hardware decommission

Decommissioning immersion-cooled hardware is the most complicated of these three cooling architectures. Every server that comes out of the tank is coated in dielectric fluid residue. That residue needs to be cleaned off before the hardware can be accurately tested, valued, or resold. The cleaning process requires solvents or cleaning agents compatible with the specific dielectric chemistry used. Fluorocarbon-based fluids, hydrocarbon-based fluids, and synthetic esters each have different cleaning requirements and different environmental handling considerations.

Fluorocarbon-based dielectric fluids include compounds classified as PFAS: per- and polyfluoroalkyl substances, sometimes called forever chemicals. Regulatory scrutiny of PFAS is increasing in the US and EU. An ITAD vendor that has never handled immersion-cooled hardware from a PFAS-fluid system may not have the disposal pathway, the regulatory knowledge, or the facility certifications to manage that stream correctly.

This is not a corner case. It’s an active regulatory and environmental risk that becomes your problem at decommission if you haven’t asked your ITAD partner about it before the project starts.

The Hybrid Model: How Air and Liquid Work Together

The vast majority of enterprise data centers with liquid cooling are not converting entirely. In other words, liquid cooling architecture is almost synonymous with a hybrid model that uses both air and liquid cooling.

The functional logic of a hybrid model is thermal zoning. High-density AI compute clusters (GPU nodes, accelerator arrays, HPC infrastructure) are isolated in liquid-cooled zones with either D2C or immersion, because their heat density warrants it.

Standard compute, storage, networking, and management infrastructure stays in air-cooled zones, where the thermal load doesn’t justify the infrastructure investment or operational complexity of liquid.

Note: The NVIDIA Rubin line is not out at the time this blog published, but its kW per-rack estimates range from

| Infrastructure Type | Typical Rack Density | Recommended Cooling | Decommission Complexity |
| --- | --- | --- | --- |
| AI/GPU compute clusters (H100, B200, MI300X) | 80-140+ kW per rack | Direct-to-chip or immersion | Medium-high: cold plate removal, fluid drainage, dielectric cleanup |
| General-purpose compute / virtualization | 5-25 kW per rack | Air cooling | Low: standard removal, no residue or fluid handling |
| Enterprise storage arrays | 5-15 kW per rack | Air cooling | Low-medium: standard removal, straightforward valuation. Complexity depends on compliance requirements |
| Core networking / top-of-rack switching | 2-8 kW per rack | Air cooling | Low: standard removal, well-understood secondary market |
| Inference-optimized servers (lighter load GPUs) | 25-50 kW per rack | Air cooling or rear-door heat exchanger | Low-medium: rear-door HX removal, no component-level fluid contact |

The rear-door heat exchanger (RDHx) is a middle-ground technology that fits naturally into hybrid designs. A liquid-cooled panel fitted to the rear of a standard server rack absorbs heat from exhaust air before it re-enters the data hall.

RDHx doesn’t require cold plates or server modifications (existing air-cooled servers operate unchanged), but it reduces the burden on facility-level cooling infrastructure and extends the viable density range of air-cooled rows. At decommission, RDHx hardware is removed at the rack level and doesn’t require component-level fluid handling.
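Thermal zoning can be expressed as a simple lookup by rack density. The sketch below is a simplification of the table above, not a sizing tool: the 25 kW and 50 kW cut points are taken from the table's ranges, and assigning everything above 50 kW to liquid is an assumption, since the table does not cover the band between 50 and 80 kW.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    max_kw: float        # upper bound of the density band, inclusive
    cooling: str         # recommended cooling approach
    decommission: str    # rough decommission complexity


# Illustrative bands only; real boundaries depend on the facility.
ZONES = (
    Zone(25.0, "air", "low"),
    Zone(50.0, "air or rear-door heat exchanger", "low-medium"),
    Zone(float("inf"), "direct-to-chip or immersion", "medium-high"),
)


def recommend(rack_kw: float) -> Zone:
    """Return the first band whose upper bound covers the rack density."""
    if rack_kw <= 0:
        raise ValueError("rack density must be positive")
    for zone in ZONES:
        if rack_kw <= zone.max_kw:
            return zone
    raise AssertionError("unreachable: last band is unbounded")


# Hypothetical rack inventory for a mixed facility.
inventory = {"storage-row-3": 12.0, "inference-row-1": 38.0, "gpu-pod-a": 120.0}
for name, kw in inventory.items():
    zone = recommend(kw)
    print(f"{name}: {kw:.0f} kW -> {zone.cooling} (decommission: {zone.decommission})")
```

Keeping the decommission rating next to the cooling recommendation makes the end-of-life cost visible at the same moment the cooling decision is made.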

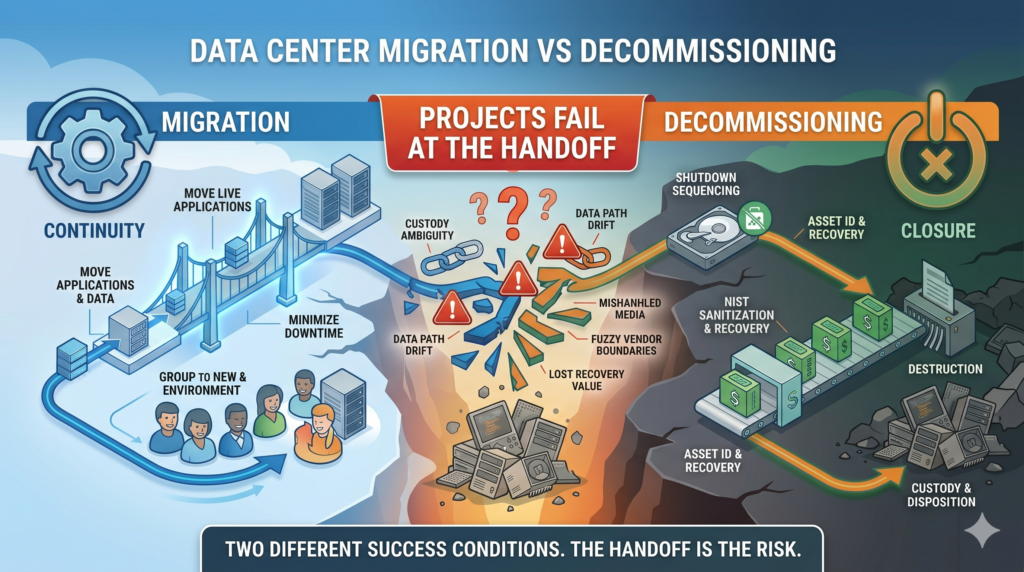

The operational challenge in a hybrid facility is that different zones require different decommission procedures, different ITAD expertise, and different documentation.

An ITAD vendor who can handle air-cooled hardware efficiently may not have the cold-plate knowledge, dielectric fluid handling capability, or environmental permits to manage the liquid-cooled zones correctly. Discuss the cooling architecture early on when planning a decommission or you’ll be stuck sorting out logistics mid-operation.

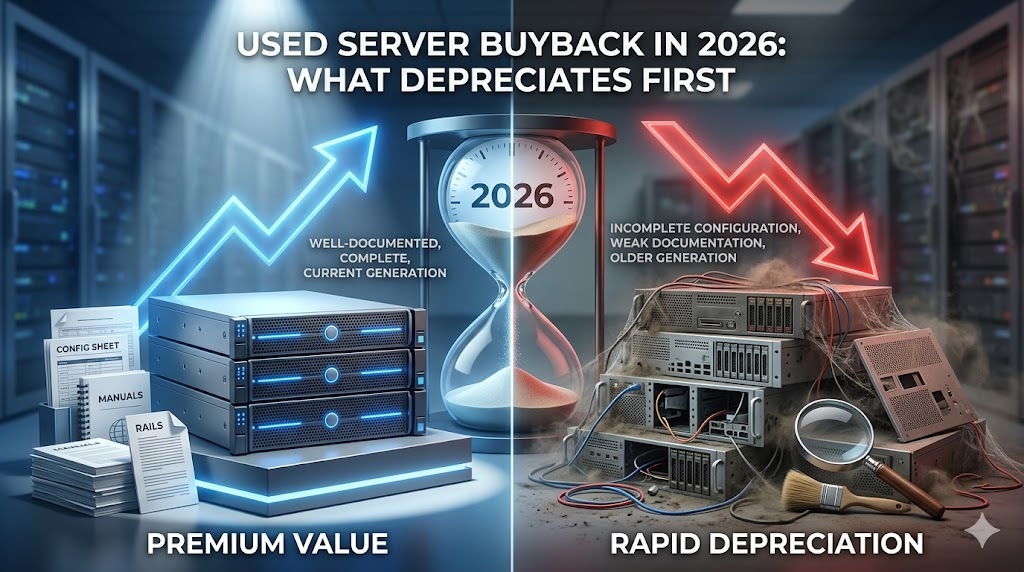

The Economics of a Liquid-Cooled Data Center Decommission

Air-cooled hardware comes out of the rack cleanly, gets tested, gets valued, and moves to the secondary market or responsible recycling on a relatively predictable timeline. The ITAD process is well understood across the industry, and recovery value is straightforward to estimate.

Direct-to-chip hardware adds labor complexity at the component level. Cold plate removal requires trained technicians who know the torque specs and removal procedure for each hardware configuration. Improperly removed cold plates damage processor packages and reduce or eliminate resale value.

An ITAD partner who has processed DGX and HGX systems before brings that knowledge. One who hasn’t will be prone to mistakes.

Immersion-cooled hardware adds fluid handling complexity on top of the component-level work. Every server requires cleaning before it can be accurately inspected or tested. The dielectric fluid chemistry determines the cleaning approach and the environmental disposal requirements.

If the facility used fluorocarbon-based fluids, the waste stream intersects with PFAS regulations that are actively evolving in both the US and EU. The ITAD vendor needs a documented disposal pathway for that stream, not a commitment to figure it out eventually.

None of these complexities make liquid cooling the wrong choice.

The thermal demands of AI hardware are a strong fit for liquid cooling. But liquid cooling comes with costs, and your data center teams need to account for them from the beginning. Plan for the added decommission work when you install the cooling architecture, not when the refresh cycle arrives.